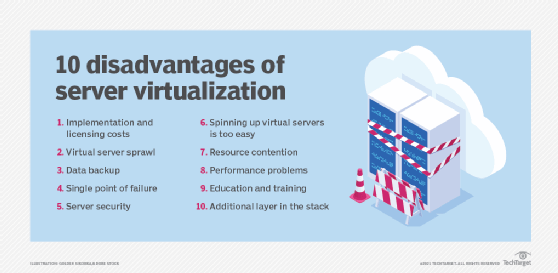

Top 10 disadvantages of server virtualization

Server virtualization is lauded for its flexibility, improved productivity and more efficient resource provisioning, but the technology is not without its drawbacks.

Server virtualization has become a dominant technology in most data centers because it addresses cost, scalability, and administration and management issues, while effectively consolidating data center hardware resources. Organizations are traditionally slow to introduce newer technologies that affect core operations, given the complexity of the data center and the often enormous investment it represents. But IT administrators can implement server virtualization incrementally, mitigating any negative effects.

A virtual environment can help produce an array of benefits, including the following:

- lower hardware acquisition costs;

- reduced energy consumption;

- more reliable disaster recovery;

- expedited application development; and

- easy and less expensive ways to run different types of server OSes.

A degree of caution is advised when launching a server virtualization project in pursuit of those benefits, as there are several drawbacks that could undermine or at least hinder the virtualization effort. Organizations that understand the potential pitfalls beforehand are likely to see fewer surprises and disruptions to data center operations. The following list of server virtualization disadvantages isn't intended to dissuade any organization from proceeding with virtualization; rather, it's meant to increase awareness so some of these stumbling blocks can be avoided.

1. Implementation and licensing costs

One of the most touted benefits of server virtualization is cost savings. Those savings are realized primarily through a reduction in hardware acquisitions. On the software side, overall expenditures are likely to rise with new costs for the hypervisors that enable virtualization. Even if the virtualization software is open source or included with a server OS, there might be additional support and maintenance fees. Management software designed specifically for the virtualized environment is also required. And, because virtualization typically increases the overall number of servers in use, additional OS licenses will be required.
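The trade-off between hardware savings and new software spending can be sketched as simple arithmetic. The function name, its parameters and every figure below are hypothetical placeholders chosen for illustration, not vendor prices; substitute real quotes before drawing any conclusion.

```python
def net_cost_change(
    servers_retired: int,
    cost_per_server: float,
    hypervisor_licenses: float,
    support_fees: float,
    management_software: float,
    extra_os_licenses: int,
    cost_per_os_license: float,
) -> float:
    """Return new software spend minus hardware savings.

    A positive result means overall costs rose; a negative one means
    the hardware savings outweighed the new software costs.
    """
    hardware_savings = servers_retired * cost_per_server
    software_costs = (
        hypervisor_licenses
        + support_fees
        + management_software
        + extra_os_licenses * cost_per_os_license
    )
    return software_costs - hardware_savings


# Placeholder figures for illustration only (assumptions, not real prices).
change = net_cost_change(
    servers_retired=10,
    cost_per_server=5_000.0,
    hypervisor_licenses=20_000.0,
    support_fees=4_000.0,
    management_software=6_000.0,
    extra_os_licenses=15,
    cost_per_os_license=800.0,
)
print(change)  # -8000.0: with these placeholders, savings exceed new costs
```

With different inputs, such as more additional OS licenses or higher support fees, the same calculation can just as easily come out positive, which is the risk this section describes.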

2. Virtual server sprawl

Although one of the key goals of server virtualization is to limit the number of physical servers, it often results in having more virtual servers than had been deployed previously. As the number of VMs increases, other components in the IT ecosystem -- notably, storage and networking -- will have to absorb the added load.

3. Data backup

Backing up active data gets tougher in a virtualized environment because there are more servers, applications and data stores to keep track of. Because virtual servers can be easily spun up and down, it's critical that the backup app can ensure that all relevant business data is copied to backup media. Most modern backup applications have virtualization features, but you must confirm that those capabilities match up well with your environment. Also, with more active servers, it might take more time to back up the additional data.

4. Spinning up virtual servers is too easy

Virtual servers are much easier to configure and launch than conventional physical servers -- that's a benefit, of course, but it can also be a problem. If allowed access, users with even limited technical expertise can spin up a new VM, maybe even without the knowledge of the sys admin. This can cause multiple problems, including spiraling OS licensing costs, untracked and unmonitored virtual servers, and possible regulatory compliance issues.
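One way to catch untracked virtual servers is to compare what is actually running against what has been approved. The following is a minimal sketch, assuming both lists can be exported as plain VM names from the hypervisor and from an asset inventory; the function name and the sample VM names are hypothetical.

```python
from __future__ import annotations


def find_untracked_vms(running: set[str], approved: set[str]) -> list[str]:
    """Return VMs that are running but missing from the approved inventory."""
    return sorted(running - approved)


# Hypothetical sample data; real lists would come from the hypervisor's
# management tool and from the organization's asset inventory.
running_vms = {"web-01", "web-02", "db-01", "test-alice"}
approved_vms = {"web-01", "web-02", "db-01", "batch-01"}

untracked = find_untracked_vms(running_vms, approved_vms)
print(untracked)  # ['test-alice']
```

Run on a schedule, a check like this gives the sys admin a short list of VMs to investigate for licensing and compliance purposes.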

5. Single point of failure

The ability to run many servers on one piece of hardware is one of virtualization's most tangible benefits, but it also creates a single point of failure. If the physical server hosting the virtual servers fails, it results in the loss of a large chunk of data center operations. Single point of failure also applies to the storage system supporting the virtual servers; if several VMs are using the same RAID array and it fails, data might be lost in addition to the interruption of service. Clustering virtual and physical servers might provide enough support to overcome a hardware failure.
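To see how much of the environment depends on any one component, it can help to group VMs by the host and storage array they rely on. This is a rough sketch under stated assumptions: the placement map, the component names and the `blast_radius` function are all hypothetical, and host and array names are assumed not to collide.

```python
from __future__ import annotations

from collections import defaultdict


def blast_radius(placements: dict[str, tuple[str, str]]) -> dict[str, list[str]]:
    """Map each host and storage array to the VMs that depend on it."""
    affected: dict[str, list[str]] = defaultdict(list)
    for vm, (host, array) in placements.items():
        affected[host].append(vm)
        affected[array].append(vm)
    return dict(affected)


# Hypothetical placement: VM name -> (physical host, storage array).
placements = {
    "web-01": ("host-a", "array-1"),
    "web-02": ("host-b", "array-1"),
    "db-01": ("host-a", "array-1"),
}

radius = blast_radius(placements)
for component, vms in sorted(radius.items()):
    print(component, len(vms), sorted(vms))
```

In this sample, the two web servers sit on different hosts, yet all three VMs share one array, so the storage system is the larger single point of failure.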

6. Server security

Server security is always a challenge, but it becomes even more complex when protecting virtual servers. The difficulty stems mainly from the number of virtual servers in an environment and their volatile lifespans, because they can be spun up and shut down so easily. Most modern security applications are VM-aware and are smart enough to provide security for all the known virtual servers' data stores. A good security program should either provide an up-to-date inventory of virtual servers or work with a virtualization management app that can provide that information.

7. Resource contention

Although admins can make resource allocation adjustments to each virtual server, if one of those VMs is overtaxed, it might affect others running on the same physical server. If contention for resources, such as CPU cycles, memory and bandwidth, is a persistent problem, more powerful hardware might be required to host multiple VMs simultaneously.
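A quick way to gauge contention risk is to compare the resources allocated to VMs with what the physical host actually has. The sketch below is illustrative only: the `Host` and `VM` classes, the field names and the sample sizes are assumptions, and it measures allocation, not live usage, so a ratio above 1.0 signals potential rather than certain contention.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Host:
    name: str
    physical_cores: int
    memory_gb: int


@dataclass
class VM:
    name: str
    host: str
    vcpus: int
    memory_gb: int


def overcommit_ratios(host: Host, vms: list[VM]) -> tuple[float, float]:
    """Return (CPU ratio, memory ratio) of allocated to physical resources."""
    on_host = [vm for vm in vms if vm.host == host.name]
    cpu_ratio = sum(vm.vcpus for vm in on_host) / host.physical_cores
    mem_ratio = sum(vm.memory_gb for vm in on_host) / host.memory_gb
    return cpu_ratio, mem_ratio


# Hypothetical host and VM sizes for illustration.
host_a = Host(name="host-a", physical_cores=16, memory_gb=128)
vms = [
    VM(name="web-01", host="host-a", vcpus=8, memory_gb=64),
    VM(name="web-02", host="host-a", vcpus=8, memory_gb=32),
    VM(name="db-01", host="host-a", vcpus=16, memory_gb=64),
]

ratios = overcommit_ratios(host_a, vms)
print(ratios)  # (2.0, 1.25)
```

What ratio is acceptable depends on the workloads and the hypervisor, so any threshold used to flag a host should be treated as a local policy decision.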

8. Performance problems

Resource contention might be a cause for poor performance, but even with adequate resources, some workloads might not perform as well on a VM as they did when they ran on a dedicated hardware server. Another performance problem could arise if the hardware isn't fully compatible with the hypervisor, although that would tend to occur more often with older server or networking hardware.

9. Additional layer in the stack

Server virtualization installs a hypervisor either directly on the physical server's hardware (a bare-metal, or Type 1, hypervisor) or on top of a host OS (a hosted, or Type 2, hypervisor); in both cases, the hypervisor enables the creation and support of virtual servers. This arrangement adds another layer to the software stack between the applications that the VMs host and the hardware resources that they require. The added layer could affect performance and requires additional drivers that must be updated periodically.

10. Education and training

Along with server virtualization come new processes, methodologies and tools to manage the new environment. These changes can be profound and require training for current IT staff on managing virtual servers and on using the new management tools that the virtualized infrastructure requires. Most IT pros will adapt easily, but it does require budgeting some time and money for education.